Back when I was in college, the internet was in its infancy; information was hard to find online and even less reliable than it is today. If I wanted to know basic facts (“What is the fundamental attribution error?”; “What are the stages of meiosis?”; “What was the Treaty of Westphalia?”), I had to either ask an expert or head to the library for a gruelling search. Information was valuable, which is why so much of college was focused on acquiring information.

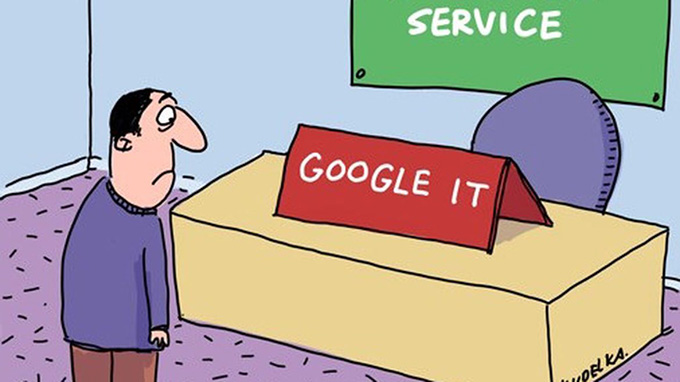

Today, things are very different. Students have all sorts of cognition-enhancing technologies, from Google to Grammarly. Basic facts are easy to find online, and with smartphones, students typically have access to the bulk of human knowledge anywhere and at any time.

Now, the problem isn’t information scarcity, but rather information overload. The challenge is no longer in finding information, but in evaluating information, synthesising information, applying information and creating new knowledge – what some psychologists refer to as cognitive skills or habits of thinking.

And yet so much of higher education is still content-based – many courses are still focused on teaching students to memorise large bodies of facts rather than how to think judiciously about those facts, which can easily be acquired online.

Many faculty believe they are already teaching students to think rather than to memorise, and in fairness, some probably are. But a brief look at any college course catalogue will quickly reveal that most courses are described and organised around the content being conveyed (“The molecular basis of addiction”; “19th-century Russian masterpieces”; “Nuclear regulatory policy” and so on) rather than cognitive skills and habits of thinking that the course hopes to instil.

Still, faculty often say that their lectures, labs and discussion sections not only convey facts but also discuss the evidence for and implications of those facts. Indeed, many classes are structured around the premise that students, by virtue of observing faculty engaging in the types of analysis, synthesis and application that the instructor hopes to convey, will naturally develop those skills themselves – a highly questionable assumption given the psychological literature on transfer and generalisability of knowledge and skills.

Even in classes that explicitly cover how to engage in critical thinking, much of what students are tested on would be classified as part of the lowest level of Bloom’s taxonomy: factual knowledge and remembering. When professors test facts and minutiae, it encourages students to focus on learning facts and minutiae to the exclusion of the higher cognitive skills that faculty are trying to teach. Resolving these problems will involve overhauling how we think about course design and student assessment.

First, courses and curricula need to be designed around the notion of explicitly teaching the kind of thinking we want to encourage, rather than assuming that students will pick up those cognitive skills through incidental exposure.

That includes creating courses that specifically signal the nature of the skills that students are expected to learn (for example, including courses in the catalogue such as “Computer-assisted literature review”; “Analysing externalities”; “Engaging in civil discourse with somebody you disagree with”) and aligning syllabi and lessons with those goals.

Second, knowing that students will focus on what is tested, we need to develop assessments that test the skills we want students to learn. One way to do this is to use open-book (and open-internet) exams. If faculty know that students will have access to Google during the exam, there is little point in asking factual questions with easily searchable answers. Instead, questions will naturally focus on how to evaluate, synthesise or apply information.

An example question might be: “Find three thinktank reports that provide different estimates of how many jobs will be lost if the minimum wage is increased to $15 an hour and explain how the different data or assumptions used by the three reports cause them to come to different conclusions.”

Alternatively, we might design practicum assessments in which students are forced to actually use skills rather than regurgitate descriptions of how to use those skills (for example, “use the techniques learned in your chemistry lab to identify a mystery compound” or “use the techniques of your human-computer interaction class to design a graphical user interface (GUI) for a banking website”).

Such assessment strategies are more difficult and time-consuming to develop and grade but are necessary to promote the types of learning that are essential for the internet age.

The needs of an educated society are changing, and higher education needs to adapt to be responsive to those changes. Universities must invest the resources needed to develop new learning priorities and techniques to deliver on those priorities in light of advancing technology, lest we become obsolete in a world of information overload.

Author Bio: Danny Oppenheimer is a Professor at Carnegie Mellon University jointly appointed in psychology and decision sciences. He studies judgement, decision-making, metacognition, learning and causal reasoning.