COVID-19 lockdowns were a huge disruption for Australian universities. With students unable to come to campus, many universities turned to “online proctoring solutions” to monitor students during exam time.

Many of these systems rely on automated facial recognition or detection, often combined with human video-monitoring of students’ homes, leading to concerns about bias, inaccuracy and intrusiveness. The rapid rollout of these systems led to student protests in Australia and elsewhere.

We interviewed students, activists, tutors, academics, and managerial and technical staff at several Australian universities to explore the effect and experience of online proctoring. We found concerns from staff around the extra workload involved in maintaining “buggy” proprietary systems. Students, meanwhile, were worried about the invasiveness of the technology, and nervous at the prospect of platform glitches disrupting exams.

Over time, students have become more tolerant of online proctoring (or perhaps resigned to it). This habituation to the technology might serve as a lesson for how emerging uses of biometric surveillance are being incorporated into daily life — as well as how they need to be controlled and regulated.

The EdTech boom

The pandemic presented a golden opportunity for the mostly US-based education technology (or “EdTech”) industry. For these players, last year was a bonanza. Two months into the pandemic, the chief executive of “comprehensive learning integrity program” Proctorio told the Washington Post:

It’s insanity. I shouldn’t be happy. I know a lot of people aren’t doing so well right now, but for us — I can’t even explain it […] We’ll probably increase our value by four to five times just this year.

At least 24 universities in Australia and New Zealand used some sort of online proctoring tool last year. In some cases this simply involved the relatively low-tech use of Zoom, but many universities opted for proctoring platforms such as Proctorio, Examity or the most popular choice, ProctorU.

How online proctoring works

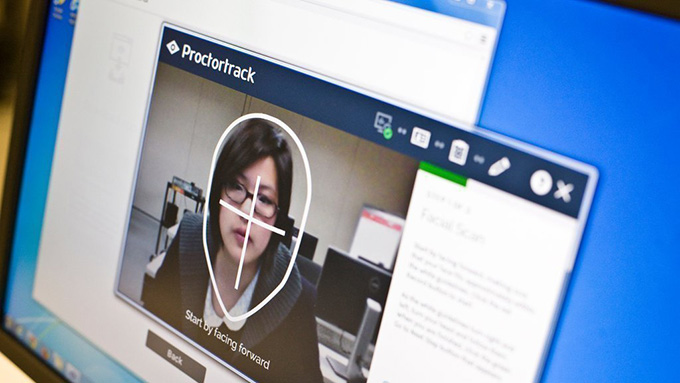

Typically, proctoring platforms combine “human” and AI monitoring to track students’ conduct during exams.

Students take their exams before the unblinking eye of their laptop camera. The AI monitoring uses tools like face detection, gaze detection and keystroke biometrics to verify students’ identity and flag “suspicious” behaviour (such as looking around the room, or moving away from their desk).

Suspicious behaviour can be reviewed by a “live” remote proctor. This work is often outsourced to developing nations such as India and the Philippines, where remote proctors are reportedly paid around A$3.50 per hour.

It is also possible to use fully automated versions of such platforms — though as we found, even “fully” automated systems require a great deal of extra work for teaching staff.

Automation creates more work

Tutors we interviewed described the additional unseen work involved.

They had to write suitable assessment tasks, set up the proctoring parameters, and wade through post-exam reports to judge evidence of anomalies. Moreover, technical staff had to methodically review the content of every assessment to confirm it would be compatible with the software.

Even after all this work, exams were still troubled by glitches. One computer science tutor described the platforms as “totally, totally, buggy”. Staff have little direct control over how the platforms work, as the precise rules used to determine “suspicious” behaviour are tightly protected commercial secrets.

Problems with facial recognition

The emergence of online proctoring has been extremely controversial. Some see it as an invasion of students’ privacy that rests on the assumption that students are inherently prone to cheating.

Critics have also argued the facial recognition tools these platforms depend on may be racially biased, and more likely to misrecognise people of colour. In the United States, facial recognition technologies have been banned outright in several cities.

The use of facial recognition is growing in Australia, with the federal government set to deploy the National Facial Biometric Matching Capability. However, debates around facial recognition’s impacts and implications have been much more muted here than overseas.

An initial wave of protests

The initial deployment of online proctoring nevertheless prompted a storm of protest on Australian campuses. Student protest leaders we interviewed found that students considered remote proctoring an unacceptable invasion of privacy and were anxious about the prospect of glitches affecting exam performance.

Even students who would not normally get involved in student politics were driven to protest. As one student organiser told us:

a lot of students are pretty apathetic to that kind of stuff [but] the response in terms of [the use of online proctoring] was a lot more visceral.

In the wake of these protests, online proctoring was limited or entirely removed in some university subjects.

‘That whole Big Brother scenario’

However, once exams had actually taken place using the platforms, some students became more “jaded” or “resigned” towards the use of the technology. Some students we interviewed were even relatively positive about the convenience of taking exams from home. One student reflected:

convenience far outweighs anything [or] any sort of issue that could possibly come up […] it’s that whole Big Brother scenario, you sort of forget they’re watching you after a little bit.

Others felt the comfort and calmness of the home environment was favourable when compared to a busy and emotionally charged exam hall.

Despite the controversy and added work of online proctoring, university administrators we spoke to were confident the technology would continue to be used in Australian institutions. As one technical support officer put it, it is rare to “un-procure” technology:

once we start (with) any new technology, it’s hard to just step back completely, and not make that offering anymore, that’s not […] how these things work.

Will an emergency fix become normal?

Online exam proctoring was introduced as an “emergency fix” during the pandemic, and may well become more prevalent as universities continue to incorporate online learning in the post-pandemic world. Before we accept it as normal, we should make sure it actually improves students’ experience of learning.

The introduction of online exam proctoring systems raises serious issues, including the impact on student education, the extra work required to keep “buggy” systems working, and the commercialisation and outsourcing of key university infrastructure.

The use and regulation of these systems must be guided by educational “best practice” principles: genuine respect for “users”, a substantive set of ethics, morals and political intent, and a meaningful contribution to the quality of higher education.

Author Bios: Gavin JD Smith is an Associate Professor in Sociology at the Australian National University. Chris O’Neill is a Research Fellow, Mark Andrejevic is a Professor in the School of Media, Film, and Journalism, Neil Selwyn is a Distinguished Research Professor, and Xin Gu is a Lecturer in Communications and Media, all at Monash University.