Very few aspects of our lives escape numerical evaluation for comparative purposes. Humanity’s current challenge is to keep Covid-19’s reproduction number, or R0, as low as possible to prevent its spread.

This numerical fixation is certainly true of university research. Many measures of research output, quality and impact are used to guide recruitment, funding and promotion. But while it might seem odd in the midst of a viral pandemic to question this, I believe that now is exactly the right time.

There is no virtue in reducing rich complexity to banal metrics. Haydn wrote 107 symphonies and Beethoven produced only nine – yet while most of us can hum at least one of Beethoven’s, few can recall any of Haydn’s. That, in essence, is the problem with using numbers as the sole measure of the worth of something as complex as what emerges from the human imagination.

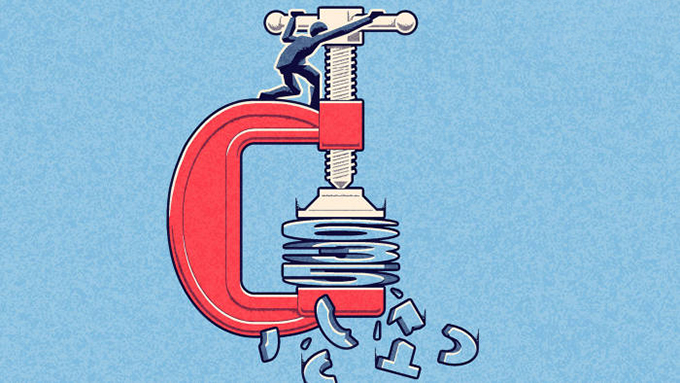

Unfortunately, measuring research quality is no different. Measures such as numbers of scholarly publications, the impact factor of the journals in which the papers are published and their accumulated citations are widely used indicators of academic excellence and impact. However, by themselves these “traditional” measures don’t capture the more subtle aspects of research quality, can trivialise deep scholarship, vary enormously by discipline and are so far removed from expressing public value they are very limited in usefulness.

An initiative aiming to shift assessment of research performance from numerical assessment to peer review is the San Francisco Declaration on Research Assessment (Dora). Its objective is to transform how research quality is understood, creating a culture where numerical measures are no longer the primary determinant of promotion and research funding.

In a global groundswell, Dora has been signed by more than 15,000 individuals and 1,800 institutions since it was launched in 2012. The University of Melbourne has now become the first university in Australia to sign up, following the likes of the Australian Academy of Science and the Australian National Health and Medical Research Council.

Numerical measures are popular with decision-makers because they give the impression of objectivity and are easier and faster than a deeper scrutiny of the research by experts. But we should always bear Goodhart’s Law in mind: “When a measure becomes a target, it ceases to be a good measure.”

Economists who study research metrics find that scholars are hesitant to embark on bold or risky research in case they are unable to produce papers able to boost their citation counts (h-index) and/or be published in journals with high impact factors (a measure initially designed not to measure research quality but to market journals to librarians).

The second problem with numerical measures is that they disadvantage academics who take time out to raise a family or to focus on teaching. They also favour those who publish large volumes of minor work, describe a widely used method or technology, or write many review articles, over those who publish deep investigations of difficult problems that have impact over the long term.

Numerical measures are immature as reflections of the best interdisciplinary research and fail to capture some of our most important research: work that improves clinical practice, reforms social policy or generates research datasets and software for others to use.

Our challenge is to disentangle research quality from numerical measures that reward caution and short-termism and that exclude certain types of research and researcher. For instance, the UK’s national Research Excellence Framework explicitly does not use journal impact factors to understand either research quality or research impact. In Australia, we have a similar strategy with the government’s research and impact agenda and the NHMRC’s “statement of impact”, introduced as part of grant applications.

Australia’s chief scientist, Alan Finkel, has called for the introduction of the “rule of five”. That would require researchers seeking jobs, promotion or funding to present their best five research papers from the past five to 10 years, accompanied by a description of the research, its impact and how they contributed to what is often a team effort.

The Walter and Eliza Hall Institute in Melbourne and the Francis Crick Institute in London are both supporting researchers by instituting seven-year appointments for new lab heads, removing the constraints imposed by the need to publish to get the next job or gain another three-year grant.

The biggest problems of our time – emerging diseases, statelessness, climate change, social equity and new energy sources, to name but a few – need the attention of our best research minds, unconstrained by simplistic measures of performance and success.

A more holistic judgment of the quality and impact of research, informed but not driven by metrics, will instil greater confidence in our research community to take the risks needed to break down paradigms and change the world.

Author Bio: Jim McCluskey is deputy vice-chancellor (research) at the University of Melbourne.