Each year, university rankings appear in abundance, causing the same stir as the publication of the major restaurant guides. Who will be in the “top 50”? Have we made progress? Where do the neighboring universities (friends, but competitors nonetheless) stand? These questions preoccupy the heads of higher education institutions.

In fact, these rankings have more in common with the major stock market indices, such as the Dow Jones or the CAC 40, than with the Michelin or Gault-Millau guides. Because this is the dominant model, universities are evaluated according to their products (training and research results), the quality of their staff and their ability to attract funding, which in turn determine the quality (intellectual and financial) and the quantity of the clientele (that is, the students) who will walk through the institution’s doors.

In this vast education market, rankings periodically draw a multi-purpose map of higher education for students, researchers, university officials and policy makers.

It should be noted from the outset that rankings are not the only benchmarks: the number of labels and accreditations of all kinds, often specific to a field, which certify the quality of teaching in certain sectors, is growing. Those awarded to the top business schools, such as AACSB or EQUIS, are the best known.

Four key sources

In Europe, especially in Latin countries, rankings are often judged harshly. It must be said that, overall, French, Belgian, Italian and Spanish universities do not shine in them.

Three rankings have a global audience:

- Academic Ranking of World Universities (ARWU), Shanghai

- Times Higher Education, The World University Rankings (THE-WUR)

- QS – World University Rankings (QS-WUR)

All three measure the quality of research (and sometimes teaching) based mainly on bibliometric indices, quantitative data provided by universities, reputation surveys and the presence of figures whose excellence has been recognized by prestigious awards (Nobel Prize, Fields Medal).

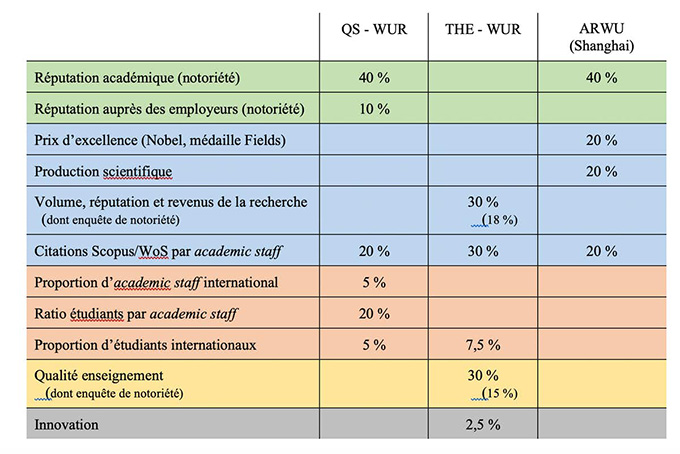

Although some criteria are specific to certain rankings, the main difference lies in their weighting:

Comparative ranking criteria.

In all three, reputation is an important element that weighs heavily on the final result (50%, 33% and 40% respectively). Next, research carries decisive weight compared with teaching. Finally, internationalization is directly taken into account by the QS-WUR, up to 30%.

Research is essentially evaluated through bibliometric indicators (Web of Science or Scopus). A first observation: not all fields are covered equally. The humanities are less well represented, and in general English-language publications take the lion’s share. This is particularly true in science and technology and in the life sciences.

Second, journals are themselves ranked, with Nature and Science enthroned at the top. This ranking, largely influenced by the major commercial publishers (Elsevier, Springer, Wiley, etc.), is itself criticized (for example, Scopus is directly controlled by Elsevier, which is also a partner of the QS-WUR!).

Rankings classify universities as a whole, which does not make much sense. As a result, rankings by field have emerged. They refine the analysis somewhat, without answering the criticisms that can be made of the use of bibliometric indicators.

A fourth, less talked-about ranking has joined these three high-profile ones: U-Multirank, supported by the European Union. It openly positions itself as an alternative to the traditional rankings, which are criticized, quite rightly, for being concerned only with research universities with an international outlook.

U-Multirank does not intend to produce a list of the best universities. Instead, it seeks to situate each institution according to five broad criteria: teaching and learning, research, knowledge transfer, international orientation, and regional engagement.

Risks of drift

Apart from the passing emotion at the time of their publication, what purpose can the rankings serve? At least four categories of “consumers” can be distinguished here: students, researchers, university officials and policy makers.

While undergraduate students most often choose their university on the basis of geographical proximity, the perspective changes at master’s and doctoral level. There, the reputation of an institution and the accreditation of certain courses appear as a guarantee of a quality degree, one that will be recognized and valued on the job market.

Early-career researchers are guided in their choices by very similar considerations. Being part of a renowned team is an asset when building a CV, and also a promise of easier access to well-“ranked” publications. For academics, contributing to their institution’s ranking actually means improving their own. This leads them to focus on their scores, which can give rise to publishing strategies.

Beyond the choice of journal (where the impact factor matters), more worrying trends are emerging. To increase the number of citations, it pays more to publish “guidelines” in a general area or an article on a fashionable theme than to pursue specialized, original topics that concern only a limited number of specialists. The pressure of rankings could thus come to condition the choice of research topics.

Academics’ interest in tending to their own ranking is sometimes motivated by the desire to eventually join a prestigious institution. A kind of scientific transfer market is thus gradually taking shape, in which talented researchers try to join renowned institutions and medium-sized universities try to improve their rankings by recruiting renowned researchers.

University heads, presidents and rectors can use rankings both internally and externally. Despite the justified criticisms that can be leveled at them, rankings do indicate something. They can therefore be used as thermometers. That said, just as one can be seriously ill with a normal temperature of 36.7 °C, a high temperature does not necessarily (indeed, rarely) indicate the precise nature of the disease.

A ranking invites us to ask questions, without our always knowing quite which ones. Doing so is all the more worthwhile when the trend can be analyzed over several years: a stable, rising or falling ranking.

Externally, rankings are part of universities’ strategy for forming partnerships and attracting students and funding. It is therefore strategic to partner with higher-ranked universities to benefit from a ripple effect, or at least with institutions in the same ranking bracket. The composition of the university networks recently awarded the European University label is indicative of the strategies that institutions have put in place.

Strategy Panel

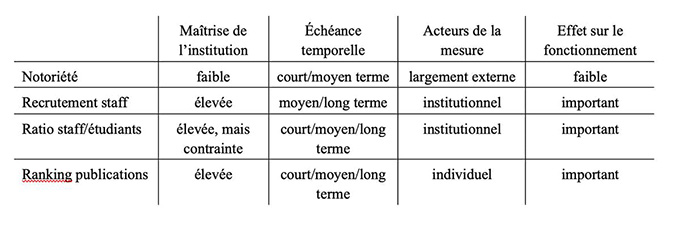

Although universities regularly sulk at ranking results, especially when they are not at the top, most are still forced to take them into account. To do so, one must first understand the parameters of the calculation and assess how much control one can exert over them. The table below aims to give an idea of the possible room for maneuver:

Issues and difficulties of the rankings.

Universities do not have the same degree of control over all the parameters that count in the rankings. For example, institutions have a firm hand on the recruitment of academics, but much less on the staff-to-student ratio, since financial constraints severely limit their ambitions, and they have very little hold over reputation surveys.

An institution can set a strong direction on publication, but it is ultimately the researchers who will or will not fall in line with this policy. If they do not, conflicts may arise. Take for example “Open Access”, which aims, among other things, to counter the (financially unsustainable) supremacy of the large scientific publishing groups.

An institution’s promotion of Open Access publishing could run counter to the concerns of individuals intent on building their own careers, which still depend on publishing in high-impact-factor journals controlled by the major publishers (Rentier 2019).

Some measures may be expected to have a fairly rapid effect on rankings, while others, which are more structural, can only produce results in the medium or long term. This is typically the case with the recruitment of new researchers. Reputation surveys, on the other hand, can be influenced by targeted communication from universities and by more aggressive lobbying.

Finally, a word on the influence of rankings on policy makers. To the extent that the cumulative rankings of all universities in a country can give a general idea of the national level of research and teaching, governments keep an eye on rankings, even though it is difficult to gauge their influence on national policies.

In conclusion, despite the low scientific value of rankings, it is illusory to imagine that they will (quickly) disappear from the academic landscape. The financial interests in promoting certain university models and certain forms of research are too great to be easily thwarted.

Promoting other types of rating and other forms of evaluation could usefully counterbalance the excessive power of global rankings. But it would take a lot of resources and expertise to do justice to all the kinds of activity, not just research, that make up a university.

Are we really ready to make this effort to bring about an original university model, that of a university at the crossroads of science, culture and society? This is a major challenge for the European political class if it is to live up to the expectations of society (see Winand 2018).

Author Bio: Jean Winand is Senior Vice-Rector and Professor of Egyptology at the University of Liège