Since Galileo, research in physics has followed a well-defined procedure built on three precepts: observation of natural phenomena, conceptualization of a law underlying them, and verification of the predictions that follow from that law.

This approach has borne much fruit, and we now know in detail the laws that apply to the world between the scale of elementary particles and that of the universe as a whole. The method, masterfully developed over the last four centuries, rests on the law of causality and follows Descartes’ reductionist approach: a problem must be broken down into as many parts as needed to build simple chains of reasoning. At each step, determinism applies. If we cannot find a causal chain, it is because we have not reduced the problem far enough. This approach is consistent with Occam’s principle of parsimony, according to which one must choose the simplest explanation to understand nature, complexity being only a last resort.

Today, this paradigm seems to be set aside by the fashionable techniques of machine learning (ML), a sub-field of artificial intelligence, the topic everyone is talking about.

Is this a revolution, and if so, what can we conclude from it?

Machine learning and neural networks

Artificial intelligence aims to create a machine capable of imitating human intelligence. It is used for machine translation of texts, image identification (in particular facial recognition), targeted advertising, and more. The objective of machine learning is more specific: it aims to teach a computer to perform a task and deliver results by identifying regularities in a batch of data. ML relies on algorithms that discover recurring patterns and similarities in existing data sets, which are then used to interpret new data. These data can be numbers, words, images, and so on. ML programs are able to predict results without trying to analyze the details of the processes involved.
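To make this concrete, here is a minimal sketch of one of the simplest machine-learning techniques, the k-nearest-neighbour classifier (chosen here for illustration; the article does not name a specific algorithm). It stores labelled examples and labels a new data point by a majority vote among the closest stored ones, without any model of the underlying process. The features, the data and the function name `knn_predict` are hypothetical.

```python
from collections import Counter
import math

def knn_predict(train, query, k=3):
    """Label `query` by majority vote among its k nearest training examples.

    `train` is a list of (features, label) pairs; features are tuples of floats.
    """
    # Sort the stored examples by Euclidean distance to the new point
    neighbours = sorted(train, key=lambda ex: math.dist(ex[0], query))[:k]
    # Majority vote among the labels of the k closest examples
    votes = Counter(label for _, label in neighbours)
    return votes.most_common(1)[0][0]

# Hypothetical toy data: two made-up features per image (arbitrary units) -> label
training_set = [
    ((0.6, 8.0), "dog"), ((0.7, 9.0), "dog"), ((0.5, 7.5), "dog"),
    ((1.2, 11.0), "wolf"), ((1.3, 12.0), "wolf"), ((1.1, 11.5), "wolf"),
]

print(knn_predict(training_set, (0.65, 8.5)))   # → dog
print(knn_predict(training_set, (1.25, 11.8)))  # → wolf
```

Nothing in the code "understands" dogs or wolves: the answer comes entirely from resemblance to previously stored data, which is the point the paragraph makes.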

Neural networks are one of the tools of this method. These are algorithms organized as a multi-layered network. The first layer ingests the data to be analyzed in the form of a batch of parameters (the image of a dog, for example); one or more hidden layers process these data using what was learned from the so-called “training” data accumulated beforehand (images of thousands of dogs); and the last layer assigns a probability to the input image. As the name suggests, neural networks are directly inspired by the functioning of the human brain. They handle complexity by taking all existing correlations into account at once, much as the eye takes in a scene as a whole.
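The layered structure described above can be sketched as a forward pass through a tiny network: an input vector, one hidden layer with a ReLU activation, and an output squashed by a sigmoid into a probability. This is a minimal sketch, not a real image classifier: the four-number “image”, the weights and the names `dense`, `forward` and `params` are invented for illustration, whereas in practice the weights are fitted on the training data.

```python
import math

def dense(inputs, weights, biases):
    """Fully connected layer: each neuron computes a weighted sum plus a bias."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]

def relu(values):
    """Hidden-layer activation: keep positive signals, zero out the rest."""
    return [max(0.0, v) for v in values]

def sigmoid(z):
    """Map any real score to a probability between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))

def forward(pixels, params):
    """Input layer -> one hidden layer -> output probability."""
    hidden = relu(dense(pixels, params["W1"], params["b1"]))
    (score,) = dense(hidden, params["W2"], params["b2"])  # single output neuron
    return sigmoid(score)  # hypothetical meaning: probability the image is a dog

# Hypothetical weights; a real network would learn them during training.
params = {
    "W1": [[0.5, -0.2, 0.1, 0.4], [-0.3, 0.8, 0.2, -0.1], [0.1, 0.1, 0.1, 0.1]],
    "b1": [0.0, 0.1, -0.05],
    "W2": [[1.2, -0.7, 0.3]],
    "b2": [0.05],
}

image = [0.9, 0.1, 0.4, 0.7]  # four made-up "pixel" values
p = forward(image, params)
print(f"P(dog) = {p:.3f}")
```

Training would adjust `W1`, `b1`, `W2` and `b2` so that the output is high for images of dogs and low for everything else.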

By detecting regularities in a large set of stored data, algorithms improve their performance at a task over time. Once trained, the algorithm can find, in new data, the patterns it learned from the data it was fed. But to obtain a satisfactory result, the system must be trained on as large a learning set as possible, one that remains representative and unbiased. This points to the basic weakness of the method: the result depends on the training. Thus, a system will more easily recognize dogs than wolves if it was shown more images of dogs during learning. In one recent case, a dog was classified as a wolf because it appeared against a white background: the training images often showed wolves against snowy landscapes.
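The dog-and-wolf mishap can be reproduced in miniature. The sketch below uses a nearest-centroid classifier, chosen only for brevity, trained on a hypothetical data set in which every wolf was photographed on snow. It then labels a dog on a snowy background as a wolf, because the background feature separates the training classes better than the animal itself does. The two features, all numbers and the function names are invented for illustration.

```python
import math

def centroids(train):
    """Average feature vector of each class (a 'nearest centroid' classifier)."""
    sums, counts = {}, {}
    for features, label in train:
        acc = sums.setdefault(label, [0.0] * len(features))
        for i, value in enumerate(features):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: tuple(s / counts[label] for s in acc) for label, acc in sums.items()}

def classify(model, features):
    """Return the class whose centroid is closest to `features`."""
    return min(model, key=lambda label: math.dist(model[label], features))

# Hypothetical features: (how wolf-like the animal looks, whiteness of background)
# Biased training set: every wolf photo was taken in the snow.
biased_training = [
    ((0.40, 0.10), "dog"), ((0.50, 0.20), "dog"), ((0.45, 0.15), "dog"),
    ((0.60, 0.90), "wolf"), ((0.55, 0.95), "wolf"), ((0.65, 0.85), "wolf"),
]

model = centroids(biased_training)
dog_in_snow = (0.40, 0.90)  # looks like a dog, but photographed on snow
print(classify(model, dog_in_snow))  # → wolf: the background dominates the decision
```

The classifier is doing exactly what it was trained to do; the error lies in the unrepresentative training set, as the paragraph above argues.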

The example of high energy physics

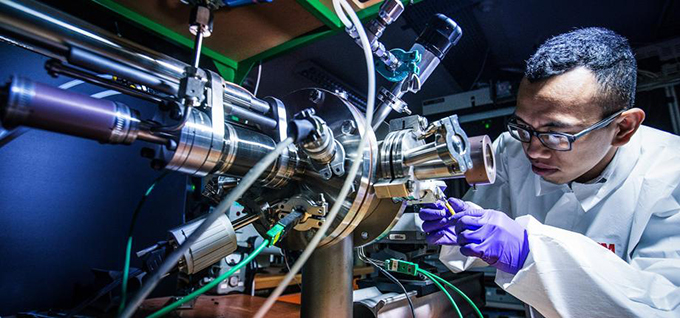

In fundamental research too, the new technique is widely used to analyze complex data. It makes it possible to develop, test and apply algorithms on different types of data in order to characterize an event. For example, ML helps physicists handle the billions of proton collisions produced at CERN’s Large Hadron Collider (LHC), where the Higgs boson was discovered. Neural networks can make data filtering faster and more accurate, and the technique improves on its own over time.

This marks a break with past methods, in which we first sought to identify specific types of particles among the reaction products by applying appropriate selection rules, and only then examined the interaction as a whole.

Here, we directly exploit the overall structure of an event. In the search for new particles, for instance, a theoretical model sets out a phenomenology with its associated parameters. Physicists simulate the production and detection of these particles. They also simulate the “noise” (the background) caused by all the other reactions predicted by the Standard Model. It is then up to machine learning to pick out the sought-after signal, and the answer is given as a probability on a likelihood scale.
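The logic of this signal-versus-background approach can be sketched with a single observable, under assumptions that are all hypothetical: signal events produce a reconstructed mass peaking near 125 GeV, background events fall off smoothly, and both are simulated. Instead of a neural network, this minimal sketch estimates each distribution with a histogram and returns the probability that an event is signal; in the ideal limit, a classifier trained on the same balanced simulated samples approximates this same quantity. Names such as `simulate_signal` and `signal_probability` are illustrative only.

```python
import random

random.seed(0)  # reproducible toy simulation

LOW, HIGH, N_BINS = 100.0, 160.0, 30  # hypothetical mass window in GeV
BIN_WIDTH = (HIGH - LOW) / N_BINS

def simulate_signal(n):
    """Toy signal: reconstructed mass peaking at 125 GeV with 2 GeV resolution."""
    return [random.gauss(125.0, 2.0) for _ in range(n)]

def simulate_background(n):
    """Toy background: smoothly falling (exponential) mass spectrum above 100 GeV."""
    return [LOW + random.expovariate(1 / 20.0) for _ in range(n)]

def bin_index(x):
    """Histogram bin containing x (clamped to the last bin for safety)."""
    return min(int((x - LOW) / BIN_WIDTH), N_BINS - 1)

def histogram(values):
    """Fraction of the in-window events that fall in each bin."""
    counts = [0] * N_BINS
    for v in values:
        if LOW <= v < HIGH:
            counts[bin_index(v)] += 1
    total = sum(counts)
    return [c / total for c in counts]

def train(signal, background):
    """Here 'training' just means estimating both distributions from simulation."""
    return histogram(signal), histogram(background)

def signal_probability(model, x, prior_signal=0.5):
    """Probability that an event with observable x is signal (Bayes' rule)."""
    if not LOW <= x < HIGH:
        return 0.0  # simplification: no estimate outside the simulated window
    sig_hist, bkg_hist = model
    i = bin_index(x)
    s = sig_hist[i] * prior_signal
    b = bkg_hist[i] * (1.0 - prior_signal)
    return s / (s + b) if s + b > 0 else 0.0

model = train(simulate_signal(20_000), simulate_background(20_000))
for mass in (105.0, 125.0, 150.0):
    print(f"m = {mass:.0f} GeV -> P(signal) = {signal_probability(model, mass):.3f}")
```

Real analyses feed many observables per event into the network, which is where ML earns its keep: a histogram like this one becomes impractical beyond a handful of dimensions.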

Yet science cannot rely blindly on ML. Physicists who exploit this revolution must stay in the driver’s seat. For now, a human is still needed to critically examine what algorithmic analyses deliver. Their role is to give meaning to the results and to make sure that the data processed are neither biased nor altered. Similarly, a physicist using an automatic translator from English to French must make sure that the word “wave” is rendered as “onde” and not as “vague” (an ocean wave) in the expression wave-particle duality.

Is physics still deterministic?

Classical physics aimed to be deterministic: it gave a single result for a given problem. The ML method, by construction, responds in a probabilistic way, with a chance of error that we seek to minimize. In gaining efficiency and speed of analysis, we give up certainty and settle for likelihood. We can often be content with this, since life itself is probabilistic.

In his time, Einstein opposed the indeterminism inherent in quantum mechanics. He believed that the human brain was capable of explaining reality completely. In this he followed a very respectable prejudice inherited from Greek philosophy. Quantum mechanics does introduce an intrinsic randomness that runs against physicists’ a priori assumptions. But this randomness remains constrained: it preserves a collective determinism, since we know exactly how to predict the evolution of a population of particles. Faced with these new developments, we must admit that probabilism is becoming a mandatory property built into the research technique itself. Einstein must be turning in his grave.
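The idea of collective determinism can be illustrated with radioactive decay, a standard textbook example not taken from the article. In the minimal sketch below, each nucleus decays at random, yet the number of survivors closely follows the deterministic law N(t) = N0 (1 - p)^t. The parameter values and the function name `simulate_decay` are arbitrary choices for illustration.

```python
import random

def simulate_decay(n_nuclei, p_decay, steps, seed=1):
    """Each nucleus decays independently with probability p_decay per time step."""
    rng = random.Random(seed)
    alive = n_nuclei
    history = [alive]
    for _ in range(steps):
        # Individual fates are random: one independent draw per surviving nucleus
        alive -= sum(1 for _ in range(alive) if rng.random() < p_decay)
        history.append(alive)
    return history

n0, p, steps = 100_000, 0.05, 20
simulated = simulate_decay(n0, p, steps)
for t in (0, 5, 10, 20):
    expected = n0 * (1 - p) ** t  # deterministic law for the whole population
    print(t, simulated[t], round(expected))
```

No single nucleus is predictable, but with 100,000 of them the simulated counts stay within a fraction of a percent of the formula.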

Explain to understand?

Classical physics sought to rationalize the process of knowledge by conceptualizing a law whose consequences were then verified experimentally. With ML, we still try to predict the evolution of a phenomenon, but the conceptualization phase has disappeared. We draw on the richness of large numbers to define a pattern that we then apply to the problem at hand. Building a theory no longer seems necessary to solve a problem. The notions of objective and subjective become blurred.

It used to be said that physics explains the “how” of natural phenomena, leaving other minds to explain the “why”. Here, the very notion of explanation has to be revisited: the share of pure intellect involved fades away, or at least, faced with the computer’s prowess, human intelligence now serves only to improve the computing process. Humans put themselves at the service of the machine.

Has physics lost its bearings? I had lost mine, and seeing my confusion, a theoretician scolded me:

“So you believe that gravitons are exchanged between the Sun and the Earth to keep our planet in its orbit? Virtual particles do not exist; they are just calculation tricks.”

Then I understood that ML must be accepted as a calculation tool more elaborate than those of the past, though this did not seem to me to be without consequences.

Physics no longer seeks to explain; it is satisfied with a result relevant to a problem, obtained with maximum efficiency. But what cannot be explained must be accepted. Pascal had already sensed a limitation of principle in physics: he classified space and time among the primitive magnitudes whose reality must be accepted without explanation, because it is “just like that”. With his allegory of the cave, Plato had the intuition that we will only ever interpret shadows, cast in the case of ML against the backdrop of a computer memory. And all this recalls the injunction of Saint Augustine, who wrote, in an obviously very different context: “We must believe in order to understand.”

So what can we conclude? In 1989, “the end of history” was announced. The prophecy turned out to be greatly exaggerated,

Author Bio: Francois Vannucci is Professor Emeritus, researcher in particle physics, specialist in neutrinos at Université Paris Cité