Today, everyone talks to machines all day long: from Dragon to Alexa, Siri and voice dictation on your phone, human-machine interaction is more than ever at the heart of our daily lives. However, this interaction is modulated by many parameters, including how much the machine physically resembles a human.

Understanding how we interact with robots allows us to better understand how we manage interaction with interlocutors that are new (on the scale of humanity) and ever more numerous, and how we integrate them into our society (compared to our other interlocutors, such as our children or our pets). Understanding how human-machine interaction works could also help us guard against potential biases in our interactions with machines: believing them to be human or attributing to them an awareness that they do not have, to cite only examples that resonate with the news.

Speech addressed to machines

“Alexa, how are you today?” Intuitively, you know you won’t pronounce this question the same way whether Alexa is your two-month-old baby, a five-year-old girl, your friend, or your boss. This is called audience accommodation. When we talk to machines, we also adapt our language, and the adaptation depends on the type of machine.

Device-directed speech has several characteristics. We adapt our pronunciation. When we speak to a robot or a smart speaker, we tend to speak louder, with a deeper, less modulated voice. We also tend to hyper-articulate, that is to say, to pronounce our words with extra care. Notably, our vowels will be longer and pronounced more precisely than when we are talking to a human.

Our grammar is also affected. We tend to produce more sentences, and simpler ones, than when we address adult humans, but more complex ones than when we address children. For example, we would use up to ten times more subordinate clauses and also many more passive formulations when talking to a robot than when talking to a child. Thus, we would rather say to the robot “The mouse, which came out of its hole, was eaten by the cat” where we would say to a child “The mouse came out of its hole. The cat ate it.”

At the same time, the gestures associated with speech (known as co-verbal gestures) are also affected: our hand movements are slower when we speak to a child, and even more so when we speak to a robot, than when we speak to another adult.

However, this behavior that we naturally adopt towards machines is not immutable. Most studies have noticed that our behavior changes over the course of an interaction with a machine, even when it only lasts a quarter of an hour, until it looks a little more like the behavior we usually reserve for our peers.

This evolution of our usage may also be related to how accustomed we are to interacting with machines. Thus, a 2010 study shows that people who use a robot for the first time talk to it much louder, higher pitched, and more hyper-articulated than to their peers, even though they seem to expect robots to have developed communication skills similar to those of humans.

The more human the robot looks, the more we treat it as such

In a 2008 study, a researcher tested 168 participants interacting with a computer avatar. The avatar could have four degrees of resemblance to a real human: it could have a low resemblance, a medium resemblance or a high resemblance to a human, or finally be the image of a real human. The researcher then observed the social responses of the participants: whether or not they trusted the avatar during the test, whether they reported feeling close to it, whether they deemed it competent, and so on. The study thus showed that the more the avatar looked human, the more social responses it received from the participants.

More recently, a study by a French team on how we speak to a smart speaker, to a humanoid robot or to a real human showed that we do indeed speak differently to a smart speaker and to an android: we speak higher-pitched and with less variation in voice to the speaker than to the android, and to the android than to the human.

This same study showed that women and men do not behave in the same way: by the end of the interaction, men end up addressing the robot as they address the speaker, that is to say, as another device, whereas women end up addressing the robot as they address the human. This difference between men and women was also observed in a study where participants spoke to a robot dog.

This linguistic behavior reflects our brain processing of the interaction

In cognitive science, the ability to attribute intentions and desires to others is called “theory of mind”. Theory of mind develops between the ages of 0 and 5, when children understand that the consciousness of others is separate from their own.

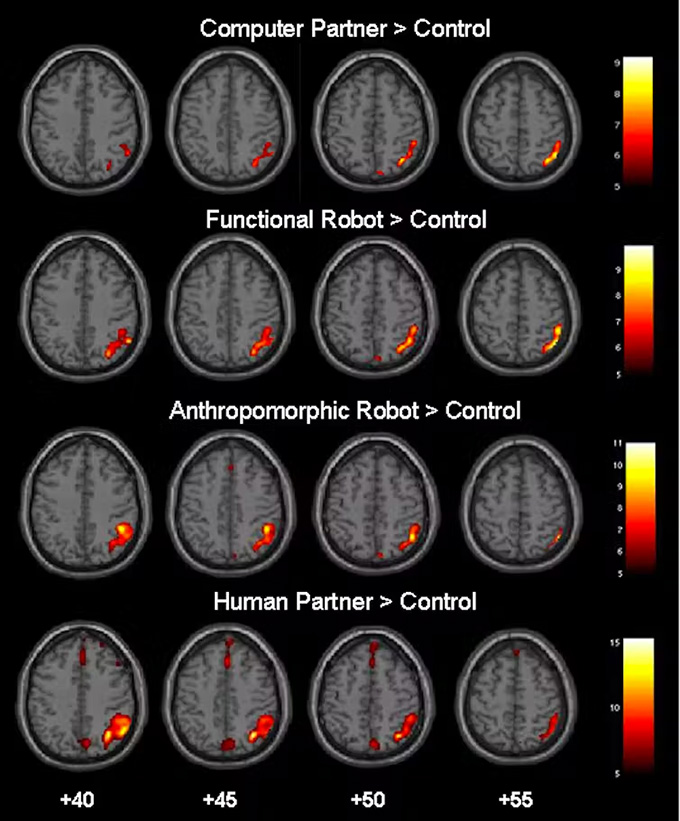

In a 2008 study, a German team showed, using medical imaging (MRI), that the more the partner in the conversation resembles a human, the more the parts of the brain associated with the theory of mind are activated.

Twenty participants played a game with either a computer, a non-anthropomorphized robot, a humanoid robot, or a human. It emerged that the more human-like the partner, the more cortical activity the MRI recorded in the frontal cortex and the temporoparietal junction, two areas of the brain associated with theory of mind. Participants also reported that the more human the partner seemed, the more competitive and fun they found the game.

Compared to a control group, the more humanoid the playing partner (from top to bottom), the higher the cortical activation, as shown by the colored areas on four axial brain slices (at +40, +45, +50 and +55). Krach and collaborators, PLOS One 2008, CC BY-NC-ND

However, the studies cited (and others) show that we always make a distinction between a humanoid interlocutor and a properly human interlocutor. The humanoid appearance of machines therefore invites us to speak to them no longer as we speak to machines, but not quite as we speak to humans either.

Author Bio: Mathilde Hutin is a Researcher in Language Sciences at the Catholic University of Louvain (UCLouvain)