Just a few years ago, we were amused by the completely fanciful photos generated by AI, but today it’s becoming increasingly difficult to distinguish the real from the fake with the naked eye. One idea could be to tag AI-generated photos. This solution could be effective, but it still has many limitations.

In 2024, deepfake videos joined the arsenal of scammers, who mimic the face and voice of a company executive to better deceive employees. They are now making their way into politics. Catherine Connolly, a candidate in the Irish presidential election, was stunned to discover a viral video in which she announced her withdrawal from the campaign. In Hungary, supporters of Prime Minister Viktor Orbán are putting artificial intelligence (AI) to work to discredit Péter Magyar, his main opponent in the parliamentary elections.

Detecting the artificiality of an image or video has become an urgent democratic imperative. Led by the GAFAM companies and the BBC, the international coalition C2PA, composed of technology companies, press groups, and media outlets, proposes a metadata model to identify the nature and origin of web content.

Building Trust in the Age of AI

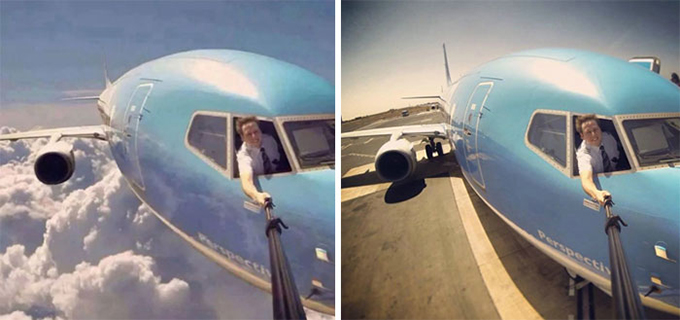

Early promptographies (AI-generated images) were notorious for their aberrations, such as six-fingered hands or paperclips as tall as skyscrapers. But, as photographer Niels Ackermann states when comparing the performance of image generators between 2022 and 2024: “Two years ago, we were still snickering, counting the extra fingers or teeth in mouths […]. That’s over!”

DALL-E, Midjourney, and their imitators are progressing rapidly, and it is becoming almost impossible to distinguish their output from photographs with the naked eye. In video, cloned faces move almost like the originals, lip movements follow the scripts they are given, and voice cloning imitates timbres, rhythms, and intonations. This opens the door not only to exciting new creations but also to the production of increasingly sophisticated deepfakes.

The situation is a source of unease for web users who fear fake news, while press and communication companies fear losing the trust of their users or customers if artificial images creep into their productions.

“Nearly 9 out of 10 consumers worldwide want to know if an image was created using AI,” according to the report “Building Trust in the Age of AI,” compiled in May 2024 by the image agency Getty Images using data collected from thousands of consumers around the world. This echoes the conclusion of the March 2024 Ifop Opinion survey: “Nine out of ten French people would support requiring a label indicating the artificial origin of content on deepfakes.”

The European regulation on artificial intelligence (AI Act) becomes applicable in August 2026. Chapter IV of this regulation is dedicated to transparency obligations: providers and deployers of AI systems must disclose content produced or modified by AI (Article 50, paragraphs 2 and 4). It also specifies the form in which this information must be available, referring to results “marked in a machine-readable format” and information “provided to the data subjects in a clear and distinct manner no later than the time of the first interaction or first exposure” (Article 50, paragraph 5). The information must therefore be, on the one hand, readable and displayable by software and, on the other hand, readable by a human as soon as the content in question is displayed.

In 2021, six companies from the media, electronics, and digital document authentication sectors (Adobe, Arm, the BBC, Intel, Microsoft, and Truepic) founded the Coalition for Content Provenance and Authenticity (C2PA). Their goal is to restore market trust in AI by developing a common method for marking digital files. C2PA promises to show users how and when the photo, video, audio, or text they are viewing was produced, what software was used to modify it, and the nature of those modifications.

The principle is based on metadata, that is, descriptive information about a piece of content. For a digital photo, for example, this includes the date, the photographer, the location of the shot, the type of camera used, and the name and version of the editing software. This metadata is generated by content publishers’ applications, cameras, or other devices, whether or not they rely on AI. Embedded in the files, it is updated with each new modification to the content, even when that modification is made with different software. C2PA’s solution relies on Content Credentials, an open, standard metadata format whose specifications are published on the C2pa.org website. To resist falsification, this data is protected by a digital signature that guarantees its origin.
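The idea of signed, chained provenance metadata can be illustrated with a minimal Python sketch. It is not the Content Credentials format itself: the field names, the `sign_manifest` and `verify_manifest` helpers, and the signing key are all hypothetical, and a shared-secret HMAC stands in for the certificate-based public-key signatures that a real implementation would rely on. The sketch only shows why a signature over a record that includes the content hash reveals both a modified file and a forged record.

```python
import hashlib
import hmac
import json
from typing import Optional

# Hypothetical key for illustration only. A real system would sign with a
# private key tied to a certificate; HMAC is a stand-in that keeps this
# sketch within the standard library.
SIGNING_KEY = b"demo-key-not-for-production"


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of the content as a hex string."""
    return hashlib.sha256(data).hexdigest()


def sign_manifest(content: bytes, tool: str, action: str,
                  parent: Optional[dict] = None) -> dict:
    """Build a provenance record bound to the content, then sign it."""
    claim = {
        "content_hash": sha256_hex(content),
        "tool": tool,
        "action": action,
        # Linking to the previous record turns the edit history into a chain.
        "parent_signature": parent["signature"] if parent else None,
    }
    payload = json.dumps(claim, sort_keys=True).encode("utf-8")
    signature = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    return {"claim": claim, "signature": signature}


def verify_manifest(content: bytes, manifest: dict) -> bool:
    """Check that the record is untouched and still matches the content."""
    payload = json.dumps(manifest["claim"], sort_keys=True).encode("utf-8")
    expected = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    signature_ok = hmac.compare_digest(expected, manifest["signature"])
    content_ok = manifest["claim"]["content_hash"] == sha256_hex(content)
    return signature_ok and content_ok


original = b"pixels produced by the generator"
created = sign_manifest(original, tool="image-generator", action="created")

cropped = b"pixels after cropping"
edited = sign_manifest(cropped, tool="photo-editor", action="cropped",
                       parent=created)

print(verify_manifest(original, created))     # True
print(verify_manifest(cropped, edited))       # True
print(verify_manifest(b"tampered", created))  # False
```

Each edit adds a new signed record that points back to the previous one, which is how the history of a file can survive a change of software.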

The coalition is on the march

But to be effective, the system must be adopted by as many publishers and content producers as possible in order to marginalize files lacking this authentication metadata (much like a consumer would avoid a product that doesn’t display a Nutri-Score). Once widely disseminated, Content Credentials will also act as a transparency label.

The goal is to attract as many influential companies as possible to the coalition, and the results are promising. In 2022, one year after its creation, C2PA had 200 international members. In February 2024, Meta, OpenAI, and Google joined the coalition… and became members of its steering committee. Among media and communications groups, Publicis, Springer Nature, Reuters, RAI, Die Zeit, and The Wall Street Journal followed the BBC’s lead, and at the end of 2025, France Télévisions announced that it had begun trials of the standard to authenticate its news broadcasts. By 2025, C2PA boasted a community of over 5,000 members.

The first Content Credentials applications are on the market

Since 2024, Adobe has been rolling out Content Credentials in its image processing applications, including the popular Photoshop and the Firefly text-to-image generator. In November 2025, OpenAI announced that Content Credentials would be automatically applied to all images generated by DALL-E 3 and GPT-image-1, and to the output of the Sora 2 generative audio and video model.

The “inspection tool” war is on. In 2026, on Pixel 10 smartphones running Android 16, the Google Photos app displays the “Content Credentials pin,” “Cr,” indicating the presence of authentication metadata in downloaded images. The Adobe Content Authenticity platform is functional in beta. A Lense verification extension, exclusively for the Chrome browser, is available on app stores.

At the beginning of 2026, the tests I performed to illustrate this article were rather laborious: images containing the valuable metadata are still rare on the web. It was therefore necessary to generate a test image with DALL-E 3 (integrated into ChatGPT), which only produces C2PA metadata when explicitly requested. However, Adobe’s inspection tool then valiantly displayed the metadata and detected the OpenAI signature. On the other hand, the test performed with the Lense extension for Chrome failed.

Author Bio: Claire Scopsi is Professor of Information and Documentation Sciences at the National Conservatory of Arts and Crafts (CNAM)