Last Saturday night, a young woman out on the town in Brisbane saw a dog-shaped robot trotting towards her and did what many of us might have felt an urge to do: she gave it a solid kick in the head.

After all, who hasn’t thought about lashing out at “intelligent” technologies that frustrate us as often as they serve us? Even if one disapproves of the young woman’s action (or sympathises with Stampy the “bionic quadruped”, a model also reportedly used by the Russian military), her impulse was quintessentially human.

As artificial intelligence and robotics are increasingly deployed to spy on and police us, it may even be a sign of healthy democracy that we’re suspicious of and occasionally hostile towards robots in our shared spaces.

Nevertheless, many people have the intuition that “violence” towards robots is wrong. However, as my research has shown, the ethics of kicking a robot dog are more complicated than might be expected.

Robots feel no pain – but what about the people around them?

Were robots ever to become sentient – capable of thinking and feeling – then it would be just as wrong to kick a robot dog as to kick a real dog, or maybe even a human being. But the robots we have today are just machines and feel nothing, so kicking them cannot be wrong on the grounds that it hurts the robot.

Moreover, we still don’t know what makes us conscious and have no idea how to produce sentience in a robot. So for the foreseeable future we don’t need to worry about causing robots themselves to suffer.

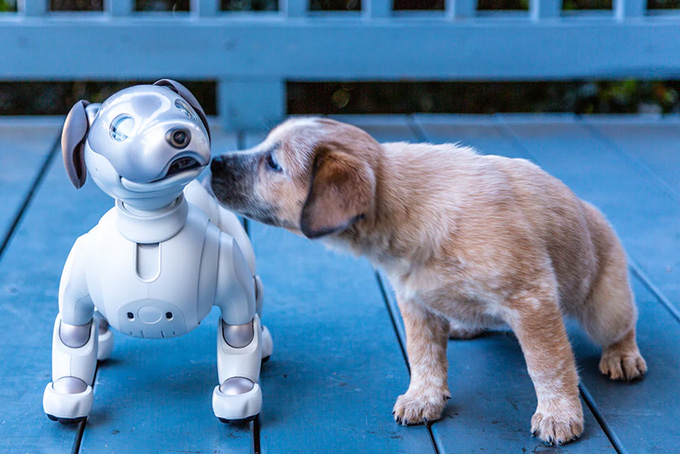

Humans aren’t the only ones who react strongly to robotic dogs. Michael Reichel / AP

One obvious reason to criticise those who damage robots is that the robots are often the property of another person, who may well be dismayed when their robot is damaged. But this reason fails to distinguish damaging robots from damaging cars or bicycles, and cannot explain why we might feel disturbed when we see someone abusing a robot they own.

That other people would feel upset when they saw me kicking a robot dog gives me some reason not to do it. But it’s not a very powerful reason, since some people may be upset by anything I do, including some things that are clearly the right thing to do.

Is kicking robots a gateway to ‘real’ violence?

Some philosophers have argued violence towards robots is wrong because it makes it more likely the perpetrator, or perhaps witnesses, will behave violently towards entities that can suffer. Abuse of robots may lower the barriers to abuse of humans and animals.

This line of argument, which has also been rolled out to criticise “violent” video games, was actually developed by the 18th-century German philosopher Immanuel Kant to explain why (he thought) cruelty to animals is wrong.

Kant denied that animals themselves were worthy of moral concern but worried that people who abused animals would develop “cruel habits”. These habits would cause them to behave badly toward those who do count according to Kant – human beings.

How we treat robots that represent people and animals might therefore have implications for how we treat the things they represent.

It’s hard not to feel the appeal of this line of thought. After all, the advertising industry is built on the idea that getting people to associate representations of things or actions with pleasure can change their behaviour. So perhaps someone who enjoys kicking a robot dog may be more likely to kick a real dog in the future.

The problem with this argument is that the evidence from real life often doesn’t bear it out.

For instance, the claim that playing “violent” video games makes people more likely to be violent in real life is highly contested. Most people can distinguish pretty clearly between fantasy and reality, and may be able to enjoy representations of violence while still abjuring real violence.

What kind of person would do that?

An alternative line of criticism of violence towards robots, which I have developed in my own work, focuses on what our treatment of robots expresses here and now, rather than on how it might affect our behaviour in the future.

How we treat robots may say something about how we feel about the things that the robots represent. It may also say something about us.

To see this, imagine you meet someone who treats “male” robots well but “female” robots badly. This pattern of behaviour looks obviously sexist.

Or imagine you find your ex laughing with glee while they beat a robot made in your image with a baseball bat. It would be hard not to think this said something about how they feel about you.

It doesn’t matter whether these actions make the people who perform them more likely to behave badly in the future. The actions express attitudes that are morally wrong in themselves.

As Aristotle argued in The Nicomachean Ethics, one way to decide how we should act is to ask: “What sort of person would do that?”

When we think about the ethics of our treatment of robots, we should think about the sort of people it reveals us to be. That might be a reason to control our tempers even in our relations with machines – or to give military and police robots in public streets the boot.

Author Bio: Robert Sparrow is Professor, Department of Philosophy; Adjunct Professor, Centre for Human Bioethics at Monash University