It has become common to complain that ideology now dominates science, on the grounds that concern for minorities and the claim that social justice should matter to everyone have taken over research. Some even go so far as to compare the current research system to Lysenkoism, an erroneous approach to plant genetics promoted by Soviet and Chinese authorities.

One example is an article published on April 27 in the Wall Street Journal, “The ‘Hurtful’ Idea of Scientific Merit”, written by the scientists Jerry Coyne, an eminent evolutionary biologist and author of the important book Why Evolution Is True, and Anna Krylov. Institutions and journals, they argue, have forgotten “scientific merit” and replaced it with ideology; they fear that the so-called “wokists” will be backed by governments and official agencies in the same way that Trofim Lysenko’s false theory of the inheritance of acquired characteristics was imposed under Stalin. If true, this would be terrible news, because in the USSR the ideological supremacy of Lysenkoism led to numerous executions and exiles.

The “anti-woke” have made similar criticisms on many occasions. In the humanities, one example was the outcry over the disclosure of poet Ted Hughes’ family connections to slavery. In psychology, the introduction of the notion of “white privilege” has been criticized.

The delicate concept of scientific merit

Coyne and Krylov talk about biology, but one could easily agree that the controversies over so-called wokism, social justice and truth concern the whole of academia, which includes the natural sciences, the social sciences, the humanities and law. Their statement is meant to apply to academia in general, and “scientific merit” here is synonymous with “academic merit”.

But this notion of “scientific merit”, sometimes called “scientific excellence” in French research evaluation policies, is obscure. In the absence of a reliable method to measure it, invoking it is a meaningless assertion. Worse still, the way in which merit itself is used by institutions and policies ultimately proves to be far more harmful to science than any radicalized “social justice warrior ideology”, if that expression makes sense at all.

In academia, “merit” means being credited with a solid and measurable contribution to science. Yet when a discovery is made or a theorem proven, it always builds on previous work. An exhaustive reconstruction recently reminded us of this by retracing Rosalind Franklin’s role in the world-acclaimed discovery of the structure of DNA (1953) by Crick and Watson, who received the Nobel Prize for it four years after Franklin’s death. The attribution of credit is therefore complicated by the inextricability of causal contributions, which makes the notion of “intellectual credit” complex, as it does the very idea of an “author”, to whom this credit is in principle due. As in a football or handball team, analyzing the contribution of each player to the goal scored by the team is not an easy task.

Social conventions have therefore been invented to overcome this quasi-metaphysical underdetermination of the “author” (and therefore of the author’s merit). In science, one of them is the discipline: being an author is not the same thing in mathematics as in sociology, and the disciplines determine what is required to sign an article, and therefore to be an author, in a given area. Another conventional tool is citation: the more a person is cited, the higher their merit.

Citation rankings are therefore meant to reflect the true greatness of individuals. To estimate it, one must list all the articles signed by an individual and cited by their peers, which gives rise to measures such as the impact factor (for journals) or the h-index (for scientists). These measures form the basis of our merit system in science, since any assessment of a person’s academic merit and of their chances of being hired, promoted or funded, in any country, juggles these combined numbers. As the Canadian sociologist Yves Gingras has said, while the “article” has been a unit of knowledge for four centuries, it is now also a unit of assessment and is used daily by hiring committees and funding agencies around the world.
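To make the h-index mentioned above concrete, here is a minimal sketch of its standard definition: a scientist has index h if h of their articles have each been cited at least h times. The function name and the two citation lists are hypothetical, invented purely for illustration; they do not describe any real researcher.

```python
def h_index(citations):
    """Return the largest h such that h articles have at least h citations each."""
    # Rank articles from most cited to least cited.
    ranked = sorted(citations, reverse=True)
    h = 0
    for rank, count in enumerate(ranked, start=1):
        if count >= rank:
            h = rank  # the top `rank` articles all have at least `rank` citations
        else:
            break
    return h


# Hypothetical profiles (illustrative numbers only).
few_highly_cited = [50, 40, 30]          # 3 articles, 120 citations in total
many_modestly_cited = [6, 6, 5, 5, 5, 5]  # 6 articles, 32 citations in total

print(h_index(few_highly_cited))     # 3
print(h_index(many_modestly_cited))  # 5
```

In this toy case the second profile gets the higher index despite far fewer total citations, because the index can never exceed the number of articles published. A single number of this kind therefore rewards volume as well as reception.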

Contrary to what Coyne and Krylov claim, these committees and agencies do set out to find the most deserving scientists, and they do so by tracking numbers of citations and publications; the more articles you publish, the more your work will be cited. Hearing that China is now the leading publishing nation and worrying about its impending victory in the publishing race, as we read every day, only makes sense if one equates the value of science with these measures.

What science loses to the idea of merit

Yet measuring scientific merit in this way undermines the quality of science, for three reasons that have been analyzed by scientists themselves. The overall result is that this type of measurement produces a “natural selection of bad science,” as evolutionary biologists Paul E. Smaldino and Richard McElreath put it in a 2016 paper. Why?

First, it is easy to game the metrics, for example by splitting an article in half, or by writing another article that merely changes the parameters of a model. This strategy needlessly increases the amount of literature that researchers must read, and so makes it harder to distinguish signal from noise in a growing forest of academic papers. Shortcuts such as fraud or plagiarism are also encouraged; it is no wonder, then, that scientific integrity agencies and research misconduct trackers have proliferated.

Second, this measure of merit encourages less exploratory science, because exploration takes time and risks finding nothing, leaving your competitors to reap all the benefits. For the same reason, journals will favor what ecologists traditionally call exploitation over the exploration of new territory, since their impact factor is based on the number of citations. A recent article in Nature argues that science has become much less innovative over the past decade as bibliometric-based assessments have flourished.

Finally, even if one wishes to retain a measure of merit linked to publication activity, merit based on bibliometrics is one-dimensional, whereas real science, as its computer-assisted quantitative study reveals, develops as an evolving landscape rather than as linear progress. Thus, what constitutes a “major contribution” to science can take many forms, depending on where you are in this landscape.

Rethinking scientific progress

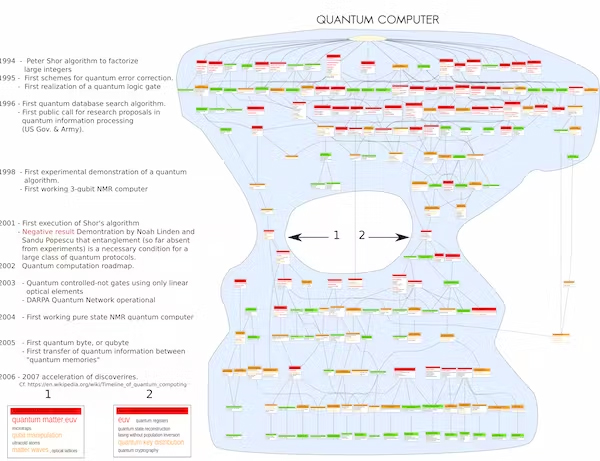

At the ISC-PIF (Paris), researchers have mapped the dynamics of science by detecting, over the years, the emergence, fusion, fission and divergence of topics defined by groups of correlated words. The accompanying figure illustrates this for the field of quantum computing, where the fissions and fusions that have occurred in the history of the field are graphically visible. It appears that the types of work carried out by scientists at distinct stages of the birth, growth or decline of a field (considered here as a set of topics) are very different and give rise to incomparable types of merit.

Progress over time in the field of quantum computing. Author provided

Thus, when a field is mature, it is easy to produce many articles. On the other hand, when it is emerging, for example through the splitting of a field or the merger of two previous fields, publications and audiences are rare, so that it is impossible to produce as many articles as a competitor working in a more mature field. Leveling everything by a common reference to citation numbers, however refined the measurements, will always miss the proper nature of each specific contribution to science.

Whatever the meaning of the word merit in science, it is multidimensional, and therefore all indexes and measures based on bibliometrics will fail to capture it, because they turn it into a one-dimensional number. But this ill-defined and ill-measured merit, as the basis for any assessment of scientists and hence for the allocation of resources (posts, grants, etc.), will help shape the face of academia and thus corrupt science more firmly than any ideology.

Therefore, merit as currently assessed is not a gold standard for science. On the contrary, this “merit” is already known to reinforce a deleterious approach to the production of knowledge, one that has many negative consequences for science and researchers alike.

Author Bios: Philippe Huneman is CNRS Research Director, Institute of History and Philosophy of Science and Technology at the University of Paris 1 Panthéon-Sorbonne, and David Chavalarias is Research Director, Center for Social Mathematics Analysis (CAMS), School of Advanced Studies in Social Sciences (EHESS) at the National Center for Scientific Research (CNRS).