Each year, various magazines and newspapers publish college rankings in an attempt to tell parents and prospective students which colleges are supposedly the best.

U.S. News & World Report’s “Best Colleges” – perhaps the most influential of these rankings – first appeared in 1983. Since then, many other rankings have emerged, assessing colleges and universities on cost, the salaries of graduates and other factors.

For example, The Wall Street Journal and Times Higher Education recently released their new rankings, which judge colleges on factors ranging from how much graduates earn to the campus environment to how much students engage with instructors.

But what, if anything, do all these college rankings really reveal about the quality and value of a particular college?

To provide a new perspective on rankings, my colleagues Matt I. Brown, Christopher F. Chabris and I decided to rank colleges according to the SAT or ACT scores of the students they admit. We approached this question as researchers with backgrounds in education and psychology.

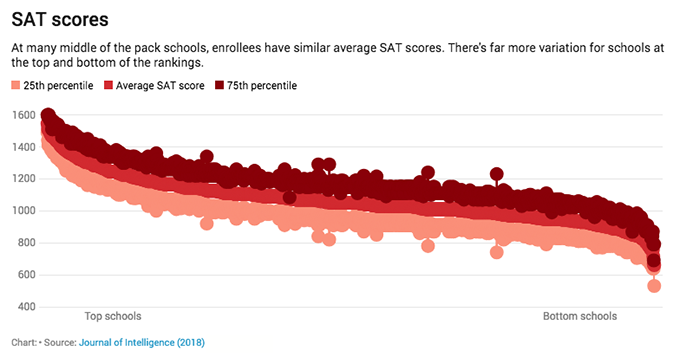

For our analysis, we took data from the 2014 U.S. News rankings and recorded the 25th and 75th percentile scores on the math and verbal subtests for 1,339 schools. We took all the ACT scores and converted them to SAT scores using a concordance table. Then, we simply ranked all the schools by this standardized test score metric.
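The procedure above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the code used in the study.

- It assumes each school reports either combined SAT Math+Verbal scores or ACT composite scores at the 25th and 75th percentiles.
- It ranks schools by the midpoint of that range.
- The `ACT_TO_SAT` dictionary is a hypothetical placeholder, not the official concordance table.
- The school names and scores are invented.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple

# HYPOTHETICAL placeholder concordance: ACT composite -> combined SAT Math+Verbal.
# Illustrative values only; a real analysis would use the published concordance table.
ACT_TO_SAT: Dict[int, int] = {20: 950, 24: 1110, 28: 1260, 32: 1420}


@dataclass
class School:
    name: str
    # Combined SAT Math+Verbal at the 25th and 75th percentiles, if reported.
    sat25: Optional[int] = None
    sat75: Optional[int] = None
    # ACT composite at the 25th and 75th percentiles, if reported instead.
    act25: Optional[int] = None
    act75: Optional[int] = None


def sat_range(school: School, concordance: Dict[int, int] = ACT_TO_SAT) -> Tuple[int, int]:
    """Return the school's (25th, 75th) percentile scores on the SAT scale."""
    if school.sat25 is not None and school.sat75 is not None:
        return school.sat25, school.sat75
    # No SAT data: convert the ACT percentiles through the concordance table.
    return concordance[school.act25], concordance[school.act75]


def rank_by_scores(schools: List[School],
                   concordance: Dict[int, int] = ACT_TO_SAT) -> List[Tuple[int, str, float]]:
    """Rank schools (1 = highest) by the midpoint of their SAT-scale range."""
    def midpoint(s: School) -> float:
        low, high = sat_range(s, concordance)
        return (low + high) / 2

    ordered = sorted(schools, key=midpoint, reverse=True)
    return [(rank, s.name, midpoint(s)) for rank, s in enumerate(ordered, start=1)]


# Usage with invented schools.
schools = [
    School("College A", sat25=1000, sat75=1200),
    School("College B", act25=28, act75=32),
    School("College C", sat25=900, sat75=1100),
]
for rank, name, score in rank_by_scores(schools):
    print(rank, name, score)
```

College B reports only ACT scores. The concordance step therefore puts it on the same scale as the SAT-reporting schools before ranking.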

Hierarchy of smarts

One thing we discovered is that schools higher up in the rankings generally admit students with higher SAT or ACT scores. In other words, what the rankings largely show is the caliber of the students a given college admits – that is, if you accept the SAT as a valid measure of a student’s caliber. Though there is often public controversy over the value of standardized tests, research shows that these tests are quite robust predictors of academic performance, career potential, creativity and job performance.

Critics of the SAT might say it actually tests for students’ wealth, not caliber. It is true that wealthier parents tend to have children with higher test scores. But research robustly shows that test scores remain predictive of later outcomes even when socioeconomic status is taken into account.
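One common way to take a third variable such as socioeconomic status (SES) into account is a partial correlation. It measures how strongly two variables move together after removing the linear influence of the third. The article does not say which method the underlying studies used, so this is only an illustration of the idea. The function name and the input values are hypothetical.

```python
from math import sqrt


def partial_correlation(r_xy: float, r_xz: float, r_yz: float) -> float:
    """Correlation of x and y after removing the linear influence of z.

    In this illustration x = test score, y = a later outcome and
    z = socioeconomic status (SES). All inputs are Pearson correlations.
    """
    denominator = sqrt((1 - r_xz ** 2) * (1 - r_yz ** 2))
    if denominator == 0:
        raise ValueError("z is perfectly correlated with x or y")
    return (r_xy - r_xz * r_yz) / denominator


# Hypothetical inputs: scores and outcomes correlate 0.5,
# and SES correlates 0.5 with each of them.
print(round(partial_correlation(0.5, 0.5, 0.5), 3))
```

Take those hypothetical numbers as an example. The correlation between scores and outcomes shrinks from 0.5 to about 0.33 once SES is accounted for, but it does not vanish. In this example, SES explains part of the relationship, not all of it.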

Our ranking also disproves the notion that the No. 1 school in the land is slightly better than the No. 2 school, and so on down the list in roughly equal steps. Rather, it shows that the vast majority of schools admit students whose combined SAT Math and Verbal scores fall between 900 and 1300. Schools differ far more from one another at the low and high ends of the distribution, where students score below 900 or above 1300 on the SAT.

Chart, “SAT scores”: at many middle-of-the-pack schools, enrollees have similar average SAT scores, while there is far more variation for schools at the top and bottom of the rankings (shows 25th percentile, average and 75th percentile SAT scores).

In particular, most of the variation occurs between “highly selective” and “elite” schools, with scores between 1300 and 1600 in the illustration. Thus, test score rankings can mean different things depending on which group of schools students and parents are considering. For example, suppose you are choosing between two schools that both fall into the vast middle range of scores, where there is much more overlap. In that case, the difference in their rankings likely will not tell you very much.
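One way to check whether two schools’ score ranges meaningfully differ is to measure how much their 25th-to-75th percentile ranges overlap. Here is a minimal sketch using a hypothetical `overlap_fraction` helper and invented score ranges.

```python
from typing import Tuple

ScoreRange = Tuple[float, float]  # (25th percentile, 75th percentile)


def overlap_fraction(a: ScoreRange, b: ScoreRange) -> float:
    """Share of the narrower score range that the two ranges have in common.

    1.0 means the narrower range lies entirely inside the other; 0.0 means
    the ranges do not overlap at all.
    """
    shared = max(0.0, min(a[1], b[1]) - max(a[0], b[0]))
    narrower = min(a[1] - a[0], b[1] - b[0])
    return shared / narrower if narrower > 0 else 0.0


# Invented ranges: two middle-of-the-pack schools vs. two near the top.
print(overlap_fraction((1000, 1200), (1050, 1250)))  # mostly overlapping
print(overlap_fraction((1400, 1550), (1250, 1380)))  # no overlap
```

Normalizing by the narrower range keeps the measure between 0 and 1. It also means a narrow range nested inside a wide one counts as full overlap.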

To our knowledge, our graph is the first illustration of how colleges and universities stack up against one another in terms of the SAT or ACT scores of the students who end up on their campuses.

For instance, The Wall Street Journal-Times Higher Education ranking methodology does not include students’ SAT/ACT scores. The U.S. News rankings include SAT/ACT scores as part of their student selectivity component, but these scores account for only about 8 percent of the total formula.

Different rankings, similar results

Our study also assessed the correlation, or statistical similarity, between our test score rankings and the U.S. News rankings themselves. It also compared our rankings with others meant to assess entirely different dimensions of colleges and universities.

A correlation of 1 indicates a perfect positive relationship between two variables, whereas a correlation of 0 indicates no relationship. Across our analyses, test score rankings correlated between about 0.66 and 0.89 with other rankings. This suggests that schools at the top of the test score rankings also tend to end up at the top of these other rankings.
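The article does not specify which correlation coefficient was used. For comparing two rankings, one standard choice is Spearman’s rank correlation. For n items with no tied ranks it equals 1 - 6 * sum(d^2) / (n * (n^2 - 1)), where d is the difference between an item’s positions in the two rankings. Here is a minimal sketch, assuming untied ranks and invented data.

```python
from typing import Sequence


def spearman_rho(ranks_a: Sequence[int], ranks_b: Sequence[int]) -> float:
    """Spearman's rank correlation for two untied rankings of the same schools.

    ranks_a[i] and ranks_b[i] are school i's positions in the two rankings.
    """
    n = len(ranks_a)
    if n != len(ranks_b) or n < 2:
        raise ValueError("need two rankings of the same length, with n >= 2")
    d_squared = sum((a - b) ** 2 for a, b in zip(ranks_a, ranks_b))
    return 1 - 6 * d_squared / (n * (n ** 2 - 1))


# Five hypothetical schools; the two rankings swap only the top two.
print(spearman_rho([1, 2, 3, 4, 5], [2, 1, 3, 4, 5]))
```

Identical rankings give 1, and a fully reversed ranking gives -1. Swapping a single adjacent pair among five schools still leaves the correlation at 0.9.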

We first found high correlations between our test score rankings and two U.S. News rankings: national universities (0.892) and liberal arts colleges (0.890). This held even though U.S. News gives these scores only about 8 percent of the weight in its formula. Times Higher Education’s U.S. school ranking correlated 0.787 with our test score ranking, and its full international school ranking correlated 0.659. This suggests that the SAT/ACT rankings could function as a common factor connecting all of these rankings.
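How could a ranking that gives test scores only about 8 percent of the weight still track them so closely? One possibility, consistent with the common-factor interpretation, is that the other ranking components are themselves correlated with incoming test scores. The hypothetical simulation below illustrates this.

- It is not the study’s analysis.
- The loading of 0.9 between test scores and the other components is an arbitrary assumption.
- The function names are made up for this sketch.

```python
import random
from typing import List


def ranks(values: List[float]) -> List[int]:
    """Rank values from 1 (largest) to n; assumes no ties."""
    order = sorted(range(len(values)), key=lambda i: values[i], reverse=True)
    result = [0] * len(values)
    for rank, i in enumerate(order, start=1):
        result[i] = rank
    return result


def spearman(x: List[float], y: List[float]) -> float:
    """Spearman's rank correlation via the no-ties formula."""
    rx, ry = ranks(x), ranks(y)
    n = len(x)
    d_squared = sum((a - b) ** 2 for a, b in zip(rx, ry))
    return 1 - 6 * d_squared / (n * (n ** 2 - 1))


def simulate(test_weight: float, loading: float = 0.9, n: int = 500, seed: int = 0) -> float:
    """Rank correlation between a composite ranking score and test scores.

    Every non-test component is modeled as loading * test + noise, i.e. it
    shares a common factor with incoming test scores (an assumption).
    """
    rng = random.Random(seed)
    test = [rng.gauss(0, 1) for _ in range(n)]
    noise_sd = (1 - loading ** 2) ** 0.5
    other = [loading * t + rng.gauss(0, noise_sd) for t in test]
    composite = [test_weight * t + (1 - test_weight) * o for t, o in zip(test, other)]
    return spearman(composite, test)


for weight in (0.0, 0.08, 0.5):
    print(f"test-score weight {weight:.2f}: rank correlation {simulate(weight):.3f}")
```

Under these assumptions, the rank correlation stays high even when test scores get zero explicit weight. The shared factor, rather than the formula’s weighting, drives the agreement.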

But what about other types of rankings that were formulated in very different ways for different purposes?

When we examined the correlation between our test score ranking and a “revealed preference ranking,” which is based on the colleges students prefer when they can choose among them, we found the two to be highly related, at 0.757.

When we compared the test score rankings to a novel set of rankings created by Lumosity, the creator of “brain games” meant to boost cognitive functioning, we found that ranking to be highly related to SAT/ACT scores as well – at 0.794.

Finally, we examined a “critical thinking” measure – the CLA+ – intended to assess critical thinking among college freshmen. We again found this to be highly related to the test score rankings – at 0.846.

A question of usefulness

The similarities among these rankings raise an important question about what they actually measure. Do they really measure the value that a college adds to a student’s life? Or are they largely a function of student test scores, which reflect student characteristics such as reasoning ability, along with educational development and other factors?

Considering the correlation between SAT scores and college rankings, is it fair for a school to tell parents they are getting a good “return on investment” for the tuition they pay? Student characteristics, as indicated by test scores, are so highly correlated with the rankings that we argue they should be treated as inputs when evaluating any outputs of a school. Research shows that standardized test scores alone predict many long-term outcomes. Schools that admit students who score well on the SAT or ACT can therefore be expected to have successful graduates.
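One simple way to treat student characteristics as inputs is to predict each school’s outcome from its incoming test scores and then look at what is left over. A school whose graduates do better than its students’ scores alone would predict may be adding value.

This sketch fits an ordinary least-squares line with one predictor. The `value_added` name, the schools’ scores and the outcome values are all invented for illustration. A realistic analysis would need more than a single input variable.

```python
from typing import List


def value_added(test_scores: List[float], outcomes: List[float]) -> List[float]:
    """Residuals from a least-squares fit of outcomes on incoming test scores.

    A positive residual means a school's graduates did better than its
    students' incoming scores alone would predict.
    """
    n = len(test_scores)
    mean_x = sum(test_scores) / n
    mean_y = sum(outcomes) / n
    sxx = sum((x - mean_x) ** 2 for x in test_scores)
    if sxx == 0:
        raise ValueError("test scores must vary across schools")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(test_scores, outcomes))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return [y - (intercept + slope * x) for x, y in zip(test_scores, outcomes)]


# Hypothetical schools: average incoming SAT score and an invented outcome index.
scores = [1000, 1100, 1200, 1300]
outcomes = [50, 60, 80, 85]
print(value_added(scores, outcomes))
```

In this made-up data, the school averaging 1300 has the best raw outcome. Yet the school averaging 1200 shows the largest positive residual, the largest apparent value added, once incoming scores are accounted for.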

Schools may want to take as much credit as they can for the education and opportunities they give students. But if a school enrolls the top students to begin with, it’s hardly surprising that such a school would end up on top in terms of other outcomes. A college’s success may be less about the quality of its instruction and more about the talent it can recruit.

Author Bio: Jonathan Wai is an Assistant Professor of Education Policy and Psychology and Endowed Chair at the University of Arkansas